1 Introduction

Buddhist sūtra texts, which are fundamental sources for understanding the beliefs that once dominated, and largely continue to dominate, Asian societies, present formidable challenges to the modern researcher. Like oral literature, the sūtras are authorless and textually fluid, and their content is complex and can be rather formulaic (). As a result, it is often impossible to determine the ‘original’ form of a given work. The situation is complicated further by the huge volume of these documents and the linguistic diversity of their extant versions: for most, only fragments survive in the languages of their original composition (i.e. Sanskrit or other Indic languages), so that we must rely on their translations, mainly into Chinese and Tibetan.

In this paper we present a novel method designed to help researchers tackle these challenges more effectively than has been possible to date. This is a method for automatic detection of cross-linguistic semantic textual similarity (STS) across historical Chinese and Tibetan Buddhist textual materials. It aims to enable philologists to take any passage in a Chinese Buddhist translation text and quickly locate Tibetan-language parallels to it anywhere in the Tibetan Buddhist canon.

The novelty of our contribution is its cross-linguistic capability for historical, low-resource and under-researched languages. Although searching for parallel passages (i.e. monolingual alignment) is possible within each of the two languages in question, Buddhist Chinese and Classical Tibetan (; , as well as, in a crude but effective way, through the user interfaces of CB Reader, in both its web-based and desktop versions, or the SAT Daizōkyō Text Database), cross-linguistic semantic textual similarity and information retrieval (i.e. cross-linguistic ‘alignment’) in Buddhist texts have long remained unsolved tasks. For a limited number of edited texts in Sanskrit and Tibetan, an attempt at automatic cross-linguistic alignment has recently been made by Nehrdich () using the YASA sentence aligner. However, this method depends on the availability of texts in which words and sentences have been manually pre-segmented, which is not the case for the vast majority of the texts we are targeting. Furthermore, since it was designed for Sanskrit and Tibetan, this method is not currently applicable to the highly specific idiom of Buddhist Chinese.

In short, no advanced cross-linguistic information retrieval techniques have yet been developed for any historical language. Both the Tibetan and Buddhist Chinese texts under investigation pose particular challenges, e.g. their different scripts, the lack of word segmentation and sentence boundaries, highly specific Buddhist terminology and (often deliberately) obscure double meanings. In this paper we build on the extant work on these languages by Vierthaler and Gelein () and Vierthaler () (for alignment and segmentation of Buddhist Chinese) and Meelen and Hill (), Faggionato and Meelen () and Meelen, Roux, and Hill () (for segmentation and POS tagging of Old and Classical Tibetan) to develop the first-ever Buddhist Chinese-Tibetan cross-linguistic STS pipeline, creating unsupervised cross-linguistic alignments for words, sentences, and whole paragraphs of these Buddhist texts, and potentially of contemporaneous non-Buddhist materials as well. Our proposed procedure for these highly specific Buddhist Chinese and Tibetan texts will be an important asset for anyone working with under-researched and low-resource historical languages.

2 Method

In recent years, large digitisation projects have provided online access to huge Buddhist Chinese and Buddhist Tibetan corpora: digitised versions of over 70,000 traditional woodblock print pages in the Tibetan case and, on the Chinese side, some 80,000 typeset print pages of the modern Taishō canon, in addition to growing quantities of other canonical and extra-canonical materials. In this section we show step by step how we developed our procedure. Figure 1 shows the full pipeline of our proposed procedure, starting with tokenisation of the individual Chinese and Tibetan corpora and ending with the full output, ranked after clustering and optimisation of the cosine similarity scores of the target outputs.

Pipeline for overall procedure of cross-lingual Buddhist Chinese & Classical Tibetan alignment.

2.1 Tokenisation

While tokenisation and sentence segmentation are not usually significant hurdles when working with documents written in Western languages, in which words are delineated by white space, these are not trivial tasks for either premodern Chinese, including Buddhist Chinese, or Classical Tibetan. Neither language uses clear morphological markers or white space to indicate words, and in many cases it is not easy to even divide a text into sentences or utterances. Accordingly, before we can develop a model, we must first preprocess our corpora to include token and sentence boundaries.

Tokenisation is especially challenging on the Chinese side. For the Chinese, we use Chinese Buddhist translation texts from the Kanseki repository (). These texts are mostly provided with punctuation, which makes sentence level segmentation relatively simple. Complications arise, however, when it comes to segmentation on the word level of these materials. While much effort is currently being invested in attempts to develop tools that will segment Chinese texts into words (some of them specifically designed to segment Buddhist materials, e.g. ), these tools remain unusable to us, since the underlying models themselves are often not openly released, and the training data used to create them is often not available. For this reason, we had to devise our own strategy for tokenising the Chinese Buddhist translation texts. In doing so, we used three different approaches and compared their efficiency: Word-based tokenisation, Character-based tokenisation, and a Hybrid approach. For the first approach, we began by creating word-based embeddings on the basis of two glossaries of Buddhist terms (; ). This allowed us to scan each sentence in our texts for Buddhist terms listed in these glossaries, prioritising longer sequences of characters. Once the Buddhist vocabulary was identified, the remaining sequences not found in the glossaries were parsed into words using a Classical Chinese tokeniser (see ). Because this word-based tokeniser introduced significant noise into our downstream tasks, we tested two other tokenisation approaches: a character-based approach that treats individual characters as tokens, and a hybrid approach that uses the word-based tokenisation described above, but which parses sequences not found in the glossaries simply as individual characters (i.e. without using the Classical Chinese tokeniser). 
For the hybrid approach, we also enhanced the dictionaries: our first test, which we refer to as ‘Hybrid 1’, uses the more advanced glossaries by Karashima Seishi (, , ), and our second test, ‘Hybrid 2’, uses an even further extended dictionary that also includes the Da zhidu lun () glossary.
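The Hybrid strategy can be sketched as a greedy longest-match lookup against a glossary, with single-character fallback. This is a minimal illustration under stated assumptions, not the authors' code: the helper name `hybrid_tokenise` is hypothetical, and the three-entry glossary is a toy stand-in for the Karashima and Da zhidu lun glossaries. The Word approach would differ only in sending unmatched runs to a Classical Chinese tokeniser instead of splitting them into characters.

```python
def hybrid_tokenise(sentence: str, glossary: set) -> list:
    """Greedy longest-match segmentation (hypothetical helper, not the authors' code).

    Multi-character glossary terms are kept whole; every other character
    becomes its own token, as in the Hybrid tokenisation approach.
    """
    max_len = max((len(term) for term in glossary), default=1)
    tokens = []
    i = 0
    while i < len(sentence):
        # Try the longest possible glossary term first, down to two characters.
        for length in range(min(max_len, len(sentence) - i), 1, -1):
            candidate = sentence[i:i + length]
            if candidate in glossary:
                tokens.append(candidate)
                i += length
                break
        else:
            # No multi-character glossary term starts here: emit one character.
            tokens.append(sentence[i])
            i += 1
    return tokens


# Toy glossary (illustrative only).
GLOSSARY = {"如來", "族姓子", "族姓女"}
print(hybrid_tokenise("若族姓子族姓女見如來者", GLOSSARY))
# ['若', '族姓子', '族姓女', '見', '如來', '者']
```

Preferring the longest match mirrors the prioritisation of longer character sequences described above; a real glossary with overlapping entries could still produce segmentation choices that a more elaborate scheme would resolve differently.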

On the Tibetan side, tokenisation was recast as a syllable-tagging and recombination task using the ACTib scripts developed by Meelen et al. (). For sentence segmentation, the technique developed by Meelen and Roux () and optimised by Faggionato, Hill, and Meelen () can create sentence boundaries in Tibetan; it performs well, but is not 100% accurate. Since existing automatic aligners rely on sentence boundaries, this accuracy is of crucial importance. A further issue is a structural difference between the specific Chinese and Tibetan texts we focus on: one sentence in Buddhist Chinese often corresponds to multiple Tibetan sentences. For these reasons, our procedure relies solely on semantic textual similarity, thereby bypassing the need for sentence boundaries altogether.
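Once each syllable has been tagged, recombining syllables into words is mechanical. The sketch below assumes a hypothetical two-tag scheme (`B` = the syllable starts a new word, `I` = it continues the previous word); the actual ACTib tag set and the tagger itself are not reproduced here, and the names `split_syllables` and `recombine` are our own.

```python
TSHEG = "\u0f0b"  # Tibetan intersyllabic delimiter (tsheg)
SHAD = "\u0f0d"   # Tibetan clause/sentence delimiter (shad)


def split_syllables(text: str) -> list:
    """Split Tibetan text into syllables on tsheg, shad and spaces."""
    normalised = text.replace(SHAD, TSHEG).replace(" ", TSHEG)
    return [syllable for syllable in normalised.split(TSHEG) if syllable]


def recombine(syllables: list, tags: list) -> list:
    """Join syllables into words using hypothetical B/I tags (not ACTib's tag set)."""
    if len(syllables) != len(tags):
        raise ValueError("need exactly one tag per syllable")
    words = []
    for syllable, tag in zip(syllables, tags):
        if tag == "I" and words:
            words[-1].append(syllable)  # continue the current word
        else:
            words.append([syllable])    # start a new word
    return [TSHEG.join(word) for word in words]


# bcom ldan 'das kyis, "the Blessed One (agentive)": one three-syllable word plus a particle.
text = TSHEG.join(["བཅོམ", "ལྡན", "འདས", "ཀྱིས"]) + TSHEG + SHAD
syllables = split_syllables(text)
print(recombine(syllables, ["B", "I", "I", "B"]))
```

The hard part, predicting the tags, is what the trained tagger does; keeping it separate from recombination means the tagger can be improved without touching this step.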

2.2 Developing Embeddings

There are many ways to acquire useful vector representations of words, known as word embeddings, which in turn can be used to aid downstream tasks like text classification, stylometric analysis, sentiment analysis, and, crucially for us, information retrieval, and its specific application in automatic textual alignments. These ways range from the straightforward count vector models that simply track word frequency across a corpus, to more advanced algorithms like Google’s Word2Vec and Facebook’s FastText, which use neural networks to develop models that can predict words based on a set of context words (continuous bag of words, or CBOW), or that can predict context words when given an input term (skip-gram). State-of-the-art word representations can be attained using transformer-based algorithms like BERT () and ERNIE (Zhang et al., 2019), which learn word representations by predicting masked words. In our procedure, in order to balance sophistication against complexity, we have elected to use FastText to create the embeddings that will drive our approach.
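The difference between the two training modes lies in how (input, prediction) examples are drawn from a context window. The sketch below only generates such examples with a fixed window; it trains no model, and the function names are ours. Real Word2Vec and FastText implementations additionally sample the effective window size and subsample frequent words, and FastText also represents each word through its character n-grams.

```python
def cbow_examples(tokens, window=2):
    """CBOW: predict each target token from the tokens around it."""
    examples = []
    for i, target in enumerate(tokens):
        context = tokens[max(0, i - window):i] + tokens[i + 1:i + 1 + window]
        if context:
            examples.append((context, target))
    return examples


def skipgram_examples(tokens, window=2):
    """Skip-gram: predict each surrounding token from the input token."""
    examples = []
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                examples.append((center, tokens[j]))
    return examples


tokens = ["佛", "告", "須賴"]  # 'the Buddha', 'told', 'Sūrata'
print(cbow_examples(tokens, window=1))
print(skipgram_examples(tokens, window=1))
```

CBOW yields one example per position with the whole context as input, while skip-gram yields one example per (input, context) pair, which is one reason skip-gram is often reported to handle rare words better.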

In addition to selecting the most adequate embedding method, it is essential to choose the most appropriate textual corpus as a basis for the embeddings. Since we aimed to create an embedding model for the specific task of aligning Chinese and Tibetan Buddhist translation texts, we chose a corpus containing precisely the type of language used in these texts. This matters because the idiom and style of Buddhist texts usually differ markedly from those of the broader language as a whole. Accordingly, for Chinese, we used the Buddhist texts contained within the Kanseki repository, encompassing the Taishō edition of the Chinese Buddhist canon and a variety of supplementary materials, for a total of 4,137 documents containing 174m characters (20,775 unique). For Tibetan, we used the sūtra translations in the Kangyur (the electronic Derge version of the eKangyur collection), as well as electronic versions of the commentarial and other texts in the entire eTengyur, to build a corpus large enough for training word embeddings. The eKangyur consists of around 27m tokens and the eTengyur of around 58m tokens (see ); together these represent 31k unique tokens.

Because we are attempting to develop a system that is not dependent on a priori knowledge of which Chinese text ‘should’ align with which Tibetan text, we trained two separate embeddings, one on the Chinese Buddhist texts, and one on the Tibetan. That is, we took each corpus independently and fed the corpora into the FastText algorithm with the same settings, creating two independent spaces of 100 dimensions each. We then projected the resulting embeddings into the same space, creating a combined embedding space, discussed in Section 2.3.

2.3 Combining Embeddings

For creating the combined embedding space, we adopted the approach of Glavaš, Franco-Salvador, Ponzetto, and Rosso (), which is in turn an implementation of the linear translation matrix approach suggested by Mikolov, Le, and Sutskever (). In effect, our method takes an embedding space for each language and then relies on a bilingual glossary to create a linear projection. This projection casts the two spaces into a shared space, one which preserves internal linguistic similarity while trying to bring the glossary terms as close together as possible. Using the two embedding spaces created in the previous step, we can then apply the aforementioned Yokoyama-Hirosawa and Inagaki glossaries, which provide Chinese and Tibetan translation pairs. We then identify every pair for which we have an embedding in both Chinese and Tibetan and use all these pairs together to create a projection into a shared embedding space.
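The core of the linear translation matrix approach is a least-squares fit: given glossary pairs with Chinese vectors x_i and Tibetan vectors z_i, find W minimising the sum of ||x_i W − z_i||². The sketch below solves the normal equations in pure Python for a toy 2-dimensional case; it is an illustration of the Mikolov-style mapping, not the Glavaš et al. implementation the paper adopts, which may differ (for example by constraining W to be orthogonal). Function names are hypothetical, and at 100 dimensions one would in practice use a linear-algebra library such as NumPy's `numpy.linalg.lstsq`.

```python
def solve(a, b):
    """Solve A X = B by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    aug = [list(row_a) + list(row_b) for row_a, row_b in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("singular system: need more independent glossary pairs")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * w for v, w in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def fit_translation_matrix(src, tgt):
    """Least-squares W minimising sum_i ||x_i W - z_i||^2 (hypothetical helper).

    src: Chinese vectors x_i; tgt: Tibetan vectors z_i, in glossary-pair order.
    """
    d_src, d_tgt = len(src[0]), len(tgt[0])
    # Normal equations: (X^T X) W = X^T Z
    xtx = [[sum(x[r] * x[c] for x in src) for c in range(d_src)] for r in range(d_src)]
    xtz = [[sum(x[r] * z[c] for x, z in zip(src, tgt)) for c in range(d_tgt)]
           for r in range(d_src)]
    return solve(xtx, xtz)


def project(vec, w):
    """Map a Chinese vector into the Tibetan space: x W."""
    return [sum(vec[k] * w[k][c] for k in range(len(vec))) for c in range(len(w[0]))]


# Toy data: the 'Tibetan' space is the 'Chinese' one rotated 90 degrees clockwise.
zh = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
bo = [[0.0, -1.0], [1.0, 0.0], [1.0, -1.0]]
W = fit_translation_matrix(zh, bo)
print(W)                         # recovers the rotation [[0.0, -1.0], [1.0, 0.0]]
print(project([2.0, 3.0], W))    # [3.0, -2.0]
```

Because only the glossary pairs constrain W, every other word is carried into the shared space by the same linear map, which is what preserves each language's internal similarity structure.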

In cases where the translation glossary includes a multi-character Chinese term not found in the embedding space, but where all constituent characters are present, an embedding is derived by averaging the vectors for all the characters within the word. We can glean some insight into the quality of the new shared embedding space by looking at the cosine similarity between known translation pairs from the glossaries, as shown in Table 1.

Table 1

Summary of cosine similarity scores of Tibetan-Chinese glossary pairs within the new embedding spaces according to Chinese tokenisation method. Shows the highest scoring pair, lowest scoring pair, and some descriptive statistics. Higher scores with lower standard deviation indicate a more accurate embedding space.

| CHINESE EMBEDDING TYPE | MOST SIMILAR | LEAST SIMILAR | MEDIAN | MEAN | STD |
|---|---|---|---|---|---|
| Character | 0.9 | –0.2 | 0.66 | 0.64 | 0.12 |
| Hybrid 1 | 0.9 | 0.19 | 0.66 | 0.65 | 0.11 |
| Hybrid 2 | 0.91 | 0.22 | 0.66 | 0.64 | 0.11 |
| Word | 0.92 | 0.3 | 0.67 | 0.67 | 0.11 |

The results listed in Table 1 show that the different Chinese tokenisation approaches used lead to different rates of similarity in the shared embedding space. For word-based embeddings and to a lesser extent ‘Hybrid 2’, these results also indicate that, in general, the larger the tokenisation dictionary, the higher the similarity. Although word-based tokenisation performs slightly better at this initial step, it does not work as well as the hybrid approaches for our downstream tasks, as shown in Section 3 below.
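The character-averaging fallback for multi-character glossary terms missing from the Chinese space, together with the kind of summary statistics reported in Table 1, can be sketched as follows. The toy vectors and the Wylie-transliterated Tibetan keys are invented for illustration, and the helper names are hypothetical. The paper does not say whether the population or sample standard deviation was used, so `pstdev` is an assumption.

```python
import math
import statistics


def cosine(u, v):
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def lookup(term, emb):
    """Vector for a Chinese term; if the term is missing but all of its
    characters are present, average the character vectors instead."""
    if term in emb:
        return emb[term]
    if len(term) > 1 and all(ch in emb for ch in term):
        vecs = [emb[ch] for ch in term]
        return [sum(col) / len(vecs) for col in zip(*vecs)]
    return None  # no usable vector: the pair is skipped


def glossary_pair_stats(pairs, zh_emb, bo_emb):
    """Table-1-style statistics over glossary pairs found in both spaces."""
    scores = []
    for zh_term, bo_term in pairs:
        u, v = lookup(zh_term, zh_emb), bo_emb.get(bo_term)
        if u is not None and v is not None:
            scores.append(cosine(u, v))
    if not scores:
        raise ValueError("no glossary pair has vectors in both spaces")
    return {
        "most_similar": max(scores),
        "least_similar": min(scores),
        "median": statistics.median(scores),
        "mean": statistics.mean(scores),
        "std": statistics.pstdev(scores),  # assumption: population std
    }


# Toy shared-space vectors (invented for illustration).
ZH_EMB = {"如": [1.0, 0.0], "來": [0.0, 1.0]}
BO_EMB = {"de bzhin gshegs pa": [1.0, 1.0], "de bzhin": [0.0, 1.0]}
PAIRS = [("如來", "de bzhin gshegs pa"), ("如", "de bzhin")]
print(glossary_pair_stats(PAIRS, ZH_EMB, BO_EMB))
```

Here 如來 is absent as a whole, so its vector is the mean of 如 and 來, exactly the fallback described for glossary terms whose characters are all present.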

As a further sanity check, we visualised some embeddings to see whether similar words are indeed located close to each other. The resulting visualisation, presented in Figure 2, demonstrates this for some sample vectors for animals, directions, numbers, and seasons: all these categories cluster together as expected. The only outlier is Tibetan nya sha, which was labelled as an animal but actually means ‘fish (as) meat’, i.e. fish that will be eaten. It is therefore not entirely surprising that it lies farther away from the other animal words, which do not refer to food. Figure 3 is a zoomed-in view of the ‘animal’ cluster from Figure 2, with English translations for the vectors. This zoomed-in view shows that Tibetan and Chinese equivalents are placed relatively close together, as expected.

A sample of embeddings selected from the cross-lingual Tibetan-Chinese space. This includes a selection of animal, numerical, seasonal, and directional words.

A zoomed-in detail of some of the animal words from the cross-lingual embedding space shown in Figure 2, including English translations.

There is room for improvement in the quality of the shared embedding space, but the real test is the space’s utility for the task at hand, which is identifying textual sequences with similar semantic meaning across languages.

2.4 Identifying similar sequences

With the combined and checked word embeddings in hand, we can apply our procedure to what has been its main goal all along: searching for sequences of text in Tibetan and Chinese that carry similar meanings. In this pilot study, our source texts are three Chinese sūtras from the Mahāratnakūṭa (MRK) collection, which have been manually divided into sections. We tokenise each section into characters, words, or Buddhist terms (as in our two Hybrid embedding approaches), fetch the vector for each token in the section, and average these vectors to create a vector representation of the entire section. We then define the Tibetan texts parallel to the Chinese sūtras as the ‘target’ and divide each target text into sections as well, using a sliding window over the Tibetan candidate document whose length is based on the length of the Chinese section, adjusted by a length factor. Next, we calculate the cosine similarity between the Chinese section in question and all Tibetan sections. Finally, the system ranks the suggested results by the cosine similarity of the combined embeddings and reports them; the highest-scoring sections are likely to have similar meaning.
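Assuming the Tibetan vectors have already been projected into the shared space, the averaging, sliding-window and ranking steps can be sketched as follows. All names and defaults are hypothetical: `length_factor=1.5` merely echoes the roughly 50% length difference between Tibetan and Chinese noted in Section 2.5, and `step` and `top_k` are illustrative. The clustering and the optimised window rates of Section 2.5 are not modelled.

```python
import math


def mean_vector(tokens, emb):
    """Average the vectors of all tokens that have an embedding."""
    vecs = [emb[t] for t in tokens if t in emb]
    if not vecs:
        return None
    return [sum(col) / len(vecs) for col in zip(*vecs)]


def cosine(u, v):
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def rank_windows(zh_tokens, bo_tokens, zh_emb, bo_emb,
                 length_factor=1.5, step=1, top_k=15):
    """Score every Tibetan window against one Chinese section (hypothetical helper).

    bo_emb must already live in the shared space. Returns (cosine, start_index)
    pairs, best first.
    """
    query = mean_vector(zh_tokens, zh_emb)
    if query is None:
        return []
    # Window length follows the Chinese section length, scaled by length_factor.
    window = max(1, round(len(zh_tokens) * length_factor))
    results = []
    for start in range(0, max(1, len(bo_tokens) - window + 1), step):
        vec = mean_vector(bo_tokens[start:start + window], bo_emb)
        if vec is not None:
            results.append((cosine(query, vec), start))
    results.sort(key=lambda r: r[0], reverse=True)
    return results[:top_k]


# Toy shared-space vectors (invented for illustration).
TOY_ZH = {"a": [1.0, 0.0]}
TOY_BO = {"x": [1.0, 0.0], "y": [0.0, 1.0], "z": [-1.0, 0.0]}
print(rank_windows(["a", "a"], ["z", "z", "x", "x", "y"], TOY_ZH, TOY_BO, length_factor=1.0))
```

Averaging makes every section a single point regardless of word order, which keeps the search cheap but also explains the weakness discussed in Section 3: windows that merely repeat the input's key terms can score highly.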

2.5 Parameter settings, Clustering & Optimisation

When we looked closely at the generated results, we found that we could improve their quality by optimising the test parameter settings, specifically the length of the Tibetan search window. One reason why such optimisation proved advantageous may be that the Tibetan text is always more elaborate than the Chinese: for every Chinese passage of n tokens, the parallel Tibetan will include roughly 50% more tokens. To accommodate this difference, we extended the Tibetan search window by a fixed rate (proportional rates proved inefficient and were therefore rejected), in order to ensure that the results would cover the entire Chinese input. Significantly shorter Chinese input phrases required a different rate still, since they tend to be proportionally even longer in Tibetan than longer Chinese phrases are. In Section 3.3 we discuss the parameter options for optimising results for different input lengths.

2.6 Sample output

Figure 4 shows an excerpt of a sample output file with the Chinese input (shown in line 1), the Tibetan target (shown in line 2), further information on location, ranking, similarity scores, etc. as well as the clustered outputs and information on how well they fit with the target. Alignments are identified by their unique alignment codes, e.g. ‘T2.A1’ refers to ‘Alignment number 1 in Text 2’. A complete overview of all manual alignments used for evaluation (see Section 2.7) can be found in the Supplementary Files.

Sample output for Alignment T2.A1.

2.7 Evaluation method

Our alignment outputs automatically receive similarity scores, which allows them to be automatically ranked. This in turn is useful to philologists, as it allows for displaying any number of ‘top’ alignments, depending on the task at hand (e.g. top 5, 10 or 15). In order to evaluate our automatic Chinese-Tibetan alignment outputs, we compared them to a manually-created gold standard. This gold standard refers to a set of data produced by expert philologists, who manually aligned three of our source and target texts and provided alignment scores based on machine translation evaluation techniques.

Producing these manual alignments was a non-trivial task, for two reasons. First, while nominally the Chinese and Tibetan texts in question are translations of the same Indic Buddhist scripture, in no case can we assume that the two were in fact translated from the same original source in Sanskrit or another Indic language; indeed, the two texts in each pair often differ from each other strikingly, in some cases entirely. Second, the very process of manually scoring the proposed alignments, with the aim of identifying ‘near-perfect’ pairs, is to a considerable degree subjective, so much so that even experienced philologists with excellent knowledge of both languages can differ in their judgements.

In order to mitigate both of the problems listed above, we created a detailed annotation and scoring guide, with diagnostics, precise decision-making criteria and examples. In addition, we had a random sample of alignments double-checked by multiple annotators to check for consistency. All in all, the philologists identified 80 near-perfect alignment pairs for three Chinese input texts and their corresponding Tibetan targets (42 for Text 1; 21 for Text 2; 17 for Text 3). These 80 alignments constituted our gold standard, which we used in testing the effectiveness and accuracy of our procedure. This manually developed gold standard is available for only three Chinese texts and their Tibetan counterparts at present, which is why we focus on these three pairs of texts only in the evaluation of this pilot study. The three texts in question are:

- Xulai jing 須賴經 (T329), from the late 3rd to early 4th century, and the Des pas zhus pa (D71), from ca. the late 8th century: translations, into Chinese and Tibetan respectively, of the *Sūrata-paripṛcchā (henceforth ‘Text 1’)

- Genghe shang youpoyi hui 恒河上優婆夷會 (T310 [31]), from the early 8th century, and the Gang ga’i mchog gis zhus pa (D75), from roughly a century later: translations of the *Gaṅgottarā-paripṛcchā (henceforth ‘Text 2’)

- Shande tianzi hui 善徳天子會 (T310 [35]), from the early 8th century, and the Sangs rgyas kyi yul bsam gyis mi khyab pa bstan pa (D79), from roughly a century later: translations of the *Acintyabuddhaviṣaya-nirdeśa (henceforth ‘Text 3’)

While all three texts survive in their entirety only in their Chinese and Tibetan translations, with no known complete Sanskrit or other Indic versions, they differ in many ways. One of these differences is especially consequential for our results: Text 1 is mainly narrative and consists of stories that illustrate moral points, while the latter two are more abstract and philosophical, and contain a narrower set of more technical metaphysical concepts. We weigh the implications of this difference in Section 3.1. In this pilot study, we use the Chinese sentence as input and let the system find Tibetan equivalents that are as semantically similar as possible, ideally capturing the exact target that the philologists identified in the gold standard.

3 Results

In this section we present the results of using the different methods of creating Buddhist Chinese embeddings described above in Section 2.2. As these embeddings were not yet optimised, a comparison of the effectiveness of the different methods when applied to each of our three texts can give us further insight into which method is best suited for the task at hand. Tibetan word embeddings were already optimised (see ), including the addition of specialist (Buddhist) terms. In the remainder of this section, we first present the aggregate results per text, and then zoom in on select ‘interesting’ results in order to discuss how they may have been affected by the different embedding methods used, as well as by the unique characteristics of the inputs qua vocabulary, style, and grammar.

3.1 Results per text

Table 2 shows what percentage of outputs for each text was ranked first or in the top 5/10/15; a separate listing is given for each of the four Chinese embedding methods. Ideally, the system would automatically rank the exact Tibetan target ‘first,’ so that philologists can instantly find the Tibetan equivalents of the Chinese inputs they are looking for. However, since this cannot be expected to happen always, or even frequently, a dedicated user interface for philologists should display the top 5/10/15 results (depending on preference), which the user would then go through by hand. For this reason, we list not only the percentage of target alignments that were automatically ranked first, but also those where the target was found in the top 5/10/15, as well as the average ranking of the target result and the number of cases in which the target alignment in Tibetan was not found in the top 15 (i.e. ranked ‘zero’).
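The measures listed in Table 2 can be computed from the rank of the gold Tibetan target for each manual alignment. In this sketch (the function name is hypothetical), the percentages are cumulative, so a target ranked first also counts towards the top 5/10/15. The paper does not state exactly how ‘zero’ cases enter the average rank; excluding them, as done here, is an assumption.

```python
def rank_metrics(ranks, cutoffs=(1, 5, 10, 15)):
    """Summarise gold-target ranks in the style of Table 2 (hypothetical helper).

    ranks: 1-based rank of the gold Tibetan target for each alignment,
    or None when the target was not retrieved (a 'zero' result).
    """
    n = len(ranks)
    found = [r for r in ranks if r is not None]
    summary = {}
    for k in cutoffs:
        # Cumulative: a target ranked 1st also counts towards top 5/10/15.
        summary[f"% rank {k}"] = round(100 * sum(r <= k for r in found) / n, 2)
    # Assumption: the average rank is taken over retrieved targets only.
    summary["av. rank"] = round(sum(found) / len(found), 2) if found else None
    summary["# zero"] = n - len(found)
    return summary


print(rank_metrics([1, 1, 3, 7, None]))
```

For the toy input, two of five targets are ranked first (40%), three fall in the top 5 (60%), four in the top 10 and 15 (80%), the average over the four retrieved targets is 3.0, and one alignment is a ‘zero’ result.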

Table 2

Results for all texts with four embedding methods for the Chinese input.

| TEXT – CHI. EMBEDDING TYPE | % RANK 1 | % RANK 5 | % RANK 10 | % RANK 15 | AV. RANK | # ZERO |
|---|---|---|---|---|---|---|
| Text 1 – Character | 30.95 | 69.05 | 78.57 | 92.86 | 4.33 | 2 |
| Text 1 – Hybrid 1 | 35.71 | 69.05 | 88.1 | 92.86 | 3.56 | 0 |
| Text 1 – Hybrid 2 | 40.48 | 73.81 | 90.48 | 95.24 | 3.4 | 0 |
| Text 1 – Word | 38.1 | 61.9 | 76.19 | 85.71 | 3.92 | 2 |
| Text 2 – Character | 76.19 | 100 | 100 | 100 | 1.24 | 0 |
| Text 2 – Hybrid 1 | 52.38 | 100 | 100 | 100 | 2 | 0 |
| Text 2 – Hybrid 2 | 61.9 | 100 | 100 | 100 | 1.57 | 0 |
| Text 2 – Word | 42.86 | 95.24 | 100 | 100 | 2.48 | 0 |
| Text 3 – Character | 35.29 | 47.06 | 52.94 | 70.59 | 4.58 | 1 |
| Text 3 – Hybrid 1 | 35.29 | 64.71 | 82.35 | 88.23 | 3.53 | 0 |
| Text 3 – Hybrid 2 | 35.29 | 58.82 | 82.35 | 82.35 | 3.36 | 0 |
| Text 3 – Word | 11.76 | 52.94 | 70.59 | 70.59 | 3.92 | 2 |

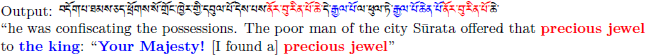

Table 2 shows that the results for Text 2 are always better than those for Texts 1 and 3: the average rank is better, i.e. numerically lower (ranging from 1.24 with Character embeddings to 2.48 with Word embeddings); there are no zero results with any of the embedding methods used; and Text 2 has the highest percentage of perfectly matched target results in the top ranks (with almost all targets found in the top 5 with any embedding method). In practice, this means that philologists inputting Chinese passages from Text 2 are very likely to be presented with the exact Tibetan targets (i.e. the semantically similar passages or target alignments identified manually by philologists) when searching the entire text. The results for Texts 1 and 3 are not as outstanding, but are still very good, with average rankings between 3.3 and 4.6 (as against 1.2–2.4 for Text 2). Still, for both Texts 1 and 3, we came across some problematic cases in which the system found no Tibetan equivalent in the top 15 ranked results, as well as cases in which the Character-embedding method yielded zero results. These problematic cases are particularly interesting to us: by looking at what went wrong, we may understand how to improve our system. One example of such a problematic case with a ‘zero result’ is Alignment 20 in Text 1 (T1.A20), shown in example 1. The highest-ranked match for this input based on Character embeddings is shown in 1c.

- (1)

- (a)

- (b)

- (c)

The system ranked 1c first, suggesting that it matches the input very closely, although even a quick look reveals that this is not at all the case. The colour coding in the examples shows, however, that the highest-ranked output contains multiple matches for a number of individual key terms present in the input, such as ‘your highness/majesty’ and ‘wealth/precious jewel’. Although the meaning of the highly ranked output suggested by the system differs from that of the input, these key terms occur multiple times in both. This may have contributed decisively to the relatively high cosine similarity score of 0.90184 (standard deviation of similarity scores: 0.02; average similarity score for this alignment: 0.85). We may add here that this problem seems to persist with other embedding methods as well: for instance, with Hybrid-2 embeddings, the highest-ranked result is an altogether different passage of the text from the one discussed immediately above, but this one too contains the very same crucial key terms ‘Your Majesty’ and ‘precious jewel’ multiple times.

In addition to such individual cases, we also need to account for the differences in the quality of results between our three texts. One reason for these differences may be that Texts 1 and 3 are much longer than Text 2 (Text 1 has 4,463 Tibetan tokens, Text 2 has 2,484, and Text 3 has 10,930), while at the same time the individual Chinese inputs for Text 2 are much shorter, which reflects both the internal features of the text and the personal preferences of the philologist who aligned it. Generally, the longer the text, the more difficult it is to rank the target match first (especially when the input passages are short), simply because there are many more competing matches than in a shorter text. We discuss this further in Section 3.3 below. Another reason might be the subjectivity of the manual alignments, which depend to some extent on the discretion of the philologist, as mentioned before. In addition, our only measure for evaluating the accuracy of the results in this pilot study is currently the ranking of the target Tibetan. This ranking is, however, not always entirely reliable, and it can easily be influenced, or even distorted, by a number of factors, e.g. text length and how repetitive or diverse the content is. One important aspect our current evaluation metric disregards is how closely non-target results with high cosine similarity scores reflect the semantic content of the input. Although we do not currently have the data required to evaluate this automatically, we shed some light on it in Section 3.5.

3.2 The effect of different Chinese embedding methods

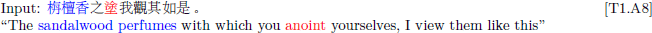

One variable parameter in our results consists of the different methods of creating Chinese embeddings, as described in Section 2.2 above. ‘Hybrid 2’ embeddings are essentially ‘Hybrid 1’ embeddings extended with additional Buddhist terms from the Da zhidu lun glossary. Therefore, whenever ‘Hybrid 2’ embeddings yielded better results for certain alignments than did Hybrid-1-embeddings, we expect this is because these alignments contain terminology that is only found in the Da zhidu lun glossary. One clear example of this is Alignment 21 in Text 1 (ranked first with Hybrid 2, but sixth with Hybrid 1). This alignment contains 如來 ‘Tathāgata’ which, among the glossaries we used, is only found in the Da zhidu lun glossary, and not in the Karashima lists upon which the ‘Hybrid 1’ embeddings were based. This example is shown in 2, along with its Tibetan target:

- (2)

- (a)

- Input: 佛告須賴言族姓子有四法具足受持若族姓子族姓女見如來者審見善見 [T1.A21]

- “The Buddha said to Sūrata: ‘Son of good family! There are four things that, if a son or daughter of good family should possess them and uphold them, will allow them to see the Tathāgata, to completely see him, to see him well.’”

- (b)

- Target

- “The Lord said: ‘Sūrata! A son of good family by possessing four things sees the Tathāgata.’”

Figure 5 shows the results (up to the top-10 ranks) from Table 2 in a chart organised by type of Chinese embedding. Though this pattern of superiority of Hybrid-2 over Hybrid-1 embeddings is expected and indeed quite common in our results, we also found one counterexample to it, namely the short Alignment 11 in Text 2 (shown in 3). In this case, Hybrid-1 performed best (target ranked 5th), while Hybrid-2 embeddings ranked the target 11th. This is unexpected, because the input contains 攀縁 ‘in accordance with conditions,’ which is found in the Karashima lists, but not in the Da zhidu lun glossary. This means that this particular term was included in both the Hybrid-1 and Hybrid-2 embeddings, and there must be another, as yet unidentified, reason why the Hybrid-1 embeddings yield a better result here.

Top-ranked results for each Chinese embedding method by text.

- (3)

- (a)

- Input: 恒河上言: 如來豈以有所攀縁, 而致斯問 [T2.A11]

- “Gaṅgottarā said: ‘Surely the Tathāgata does not ask this question because he has [something] which could be referentially objectified, does he?’”

- (b)

- “She asked: ‘Does the Blessed One say this because the question is endowed with a referential object?’”

Another category of results consists of those in which Character embeddings performed best. In these cases we expect to be dealing with inputs that contain few multi-character proper nouns and specialist Buddhist terms, which is indeed the usual pattern. Nonetheless, we found a number of exceptions, e.g. Alignment 12 of Text 2. The input here does contain some technical multi-character terms (世尊 ‘Bhagavān’, 能知 ‘knowable’ and 能得 ‘graspable’). This might lead one to expect that Hybrid embeddings would perform best. This, however, is not the case: Character embeddings proved superior. The reason for this is not entirely clear, although it may have something to do with the fact that all the terms listed above also make sense if they are split up into single characters (‘world-honour,’ ‘able-know,’ ‘able-grasp’ respectively). A similar explanation can be offered for Alignment 33 in Text 1 (ranked 14th with Char vs 24/35th with Hybrid-1/2 embeddings), so this phenomenon does not appear to be text-specific. Other cases of better performance of Char-embeddings include:

- Text 1: Alignments 27 (ranked 2nd with Char vs 4/7th with Hybrid-1/Word) and 32 (ranked 2nd with Char vs 7th in Hybrid-1 and Word);

- Text 3: Alignments 12 and 15 (both ranked 1st with Char vs 3rd/4th with Hybrid-1/Word), and also 7 (ranked 8th with Char vs 19/36th with Hybrid-1/Word), 13 (ranked 1st with Char vs 3rd/6th with Hybrid-1/Hybrid-2) and 14 (ranked 1st with Char vs 6th/3rd with Hybrid/Word).

Some of these cases are especially difficult to interpret. For instance, Alignments 27 and 32 of Text 1 contain multi-character proper names, such as 波斯匿 ‘Prasenajit.’ These are expected to pose difficulties for Char embeddings: although they can be read as individual characters, the result is gibberish (波-斯-匿 is ‘wave-this-conceal’). Similarly, Alignments 12 and 15 of Text 3 contain the long phonetic transcription of a Sanskrit name, 文殊師利 ‘Mañjuśrī’, which makes little sense when read as individual characters (‘literature-distinct-teacher-benefit’) and can therefore only be ‘misleading’ for alignment purposes. As for Alignments 7, 13 and 14 of Text 3, the superior performance of Char embeddings may be related to the fact that these inputs are extremely short, consisting of at most 7 characters (see Section 3.3). Such unexpected examples form a minority, however, and while further analysis of them is a desideratum, it can only be performed at a later stage, using a larger dataset. Overall, we conclude that in the three texts investigated in this pilot study, the enhanced Hybrid-2 embeddings generally perform better for alignments that contain specialist Buddhist terminology, while in the absence of such terminology Char embeddings perform equally well or better, which is exactly what we expected.
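The contrast between Char and Hybrid inputs comes down to whether multi-character terms such as 文殊師利 are kept whole or split into single characters. This section does not spell out the segmentation step, so the sketch below is only a hypothetical illustration: it assumes a greedy longest-match lookup against a term list (standing in for resources like the Karashima lists or the Da zhidu lun glossary), with single characters as the fallback. The function names, the `max_len` cap and the toy glossary are our own assumptions.

```python
def char_segment(text: str) -> list[str]:
    """Character-level segmentation: every non-space character is a token."""
    return [ch for ch in text if not ch.isspace()]


def hybrid_segment(text: str, glossary: set[str], max_len: int = 8) -> list[str]:
    """Greedy longest-match segmentation (hypothetical stand-in for the
    Hybrid embeddings' term handling): glossary terms stay intact, and
    everything else falls back to single characters."""
    tokens: list[str] = []
    i = 0
    while i < len(text):
        if text[i].isspace():
            i += 1
            continue
        token = text[i]  # fallback: a single character
        # Try the longest glossary term starting at position i first.
        for length in range(min(max_len, len(text) - i), 1, -1):
            candidate = text[i:i + length]
            if candidate in glossary:
                token = candidate
                break
        tokens.append(token)
        i += len(token)
    return tokens


# Toy glossary for illustration; real term lists are far larger.
glossary = {"文殊師利", "波斯匿", "世尊"}
print(char_segment("文殊師利白佛言"))             # ['文', '殊', '師', '利', '白', '佛', '言']
print(hybrid_segment("文殊師利白佛言", glossary))  # ['文殊師利', '白', '佛', '言']
```

Under this kind of scheme, a term like 世尊 yields meaningful pieces either way, whereas a transcription like 波斯匿 only carries its meaning when it is kept whole.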

3.3 The effect of input length

Some texts exhibit a relatively high degree of repetition of short, generic clauses. This presents a challenge for the alignment procedure: if the text contains multiple passages with very similar meanings, it is unclear which of them is the target identified by the philologists. This problem pertains especially to Texts 2 and 3, where aligned segments are relatively short. Text 3 in particular contains short recurring inputs like ‘X said’, e.g. Alignment 7 with input 諸比丘言 ‘all the monks said’ (ranked 8th), or Alignment 11 with input 汝等應知 ‘you all should know’ (partial match ranked 12th, because the Tibetan target contains an additional vocative ‘friends!’). While short inputs pose challenges to our procedure, very long inputs usually lead to good results. One example of this is Alignment 10 in Text 3, which contains a very long tantric incantation. As input length clearly affects our results, we included the option of adjusting several minor parameters in order to improve the results for inputs of varying length:

- The proportion by which to adjust long phrases (as they are generally longer in Tibetan than in Chinese);

- The proportion by which to adjust short phrases (as short Chinese phrases are often significantly longer in Tibetan);

- The length threshold for what constitutes a “short phrase”;

- How far apart results can be clustered together in the final analysis (results within n words of each other get reported as a single result).
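The list above names the parameters but not how they are applied. The following is a minimal sketch under explicit assumptions: each proportion is treated as an increase applied to the Chinese input length to obtain the length of the Tibetan comparison window, and result positions are word offsets in the Tibetan text. All names and default values are hypothetical; `short_increase=0.5` only loosely mirrors the 50% short-phrase setting reported in the next paragraph, and our reading of it as an increase is itself an assumption.

```python
from dataclasses import dataclass


@dataclass
class LengthParams:
    """Hypothetical parameter names and defaults, for illustration only."""
    long_increase: float = 0.3   # proportional lengthening of long phrases
    short_increase: float = 0.5  # proportional lengthening of short phrases
    short_threshold: int = 8     # inputs shorter than this count as "short"
    cluster_distance: int = 5    # hits within n words merge into one result


def window_length(input_len: int, p: LengthParams) -> int:
    """Estimate the length of the Tibetan comparison window for a Chinese
    input of input_len tokens, applying the short- or long-phrase increase."""
    increase = p.short_increase if input_len < p.short_threshold else p.long_increase
    return max(1, round(input_len * (1 + increase)))


def cluster_hits(positions: list[int], p: LengthParams) -> list[list[int]]:
    """Group hit positions (word offsets in the Tibetan text) so that hits
    within cluster_distance words of the previous hit form a single result."""
    clusters: list[list[int]] = []
    for pos in sorted(positions):
        if clusters and pos - clusters[-1][-1] <= p.cluster_distance:
            clusters[-1].append(pos)
        else:
            clusters.append([pos])
    return clusters


params = LengthParams()
print(window_length(4, params))   # short input: round(4 * 1.5) = 6
print(window_length(21, params))  # long input: round(21 * 1.3) = 27
print(cluster_hits([3, 40, 5, 12, 44, 100], params))  # [[3, 5], [12], [40, 44], [100]]
```

Clustering here chains consecutive hits, so a run of closely spaced hits is reported once even if its first and last members are more than `cluster_distance` words apart; a stricter variant could instead compare every hit with the first member of its cluster.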

Of these minor parameters, adjusting those for long and short phrases had the greatest impact on the results. This is most clearly seen in examples from Text 2, which has the longest input alignments in general (median length 21 characters, against 12.5 for Text 1 and 10 for Text 3). Alignments 4, 6 and 15 of this text demonstrate the importance of adjustments according to phrase length. With the new setting of a 50% length adjustment for short phrases from Chinese to Tibetan, instead of the much larger 130%/140%/160% adjustments we had tested before, the rankings improved significantly (from 14th to 3rd for Alignment 4, from 11th to 2nd for Alignment 6 and from 6th to 2nd for Alignment 15). For some alignments, however, reducing the phrase-length settings lowered rather than raised the rankings, although these differences were much smaller than the gains observed for the other alignments (from 1st to 3rd for Alignment 1; from 1st to 2nd for Alignments 10 and 17). Our current corpora are too small to justify any generalisations here. However, based on the results of our pilot study we can conclude that it is certainly worthwhile to allow for the adjustment of additional parameters, and that the optimal settings are a function of input length and content (i.e. how common the key terms of the input are and how often they recur in the text).

3.4 The effect of manual annotation

One limitation of the current pilot study lies in the manual annotation: the alignments of our three texts were scored by three different philologists, one for each text. For Text 1, we asked the same annotator to score his alignments on two different occasions, at least one year apart. We observed that some alignments he had at first identified as perfect equivalents (score 5) were scored 4 in the second round. This highlights the important issue of subjectivity in manual scoring, which can only be addressed effectively through rigorous and repeated large-scale inter-annotator agreement checks. At present, however, such checks are almost impossible for logistical reasons: they require the time- and labour-intensive participation of multiple philologists who are experts both in the two classical languages and in the highly complex Buddhist content of the texts, and such participation is extremely difficult to secure. In view of this, while in future work we hope to include at least partial inter-annotator agreement scores, in the present pilot study we had to settle for the sub-optimal single-annotator method.
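The pilot study reports no agreement statistic, but the check described above could be quantified along the following lines. As an illustration only, this sketch computes quadratic-weighted Cohen's kappa, a common choice for ordinal scales like the 1 to 5 alignment scores, between two rounds of scoring; it applies equally to the same annotator's two rounds or to two different annotators. The score lists are invented placeholders, not data from the study.

```python
def weighted_kappa(round1: list[int], round2: list[int],
                   categories: tuple[int, ...] = (1, 2, 3, 4, 5)) -> float:
    """Quadratic-weighted Cohen's kappa for two lists of ordinal scores."""
    if len(round1) != len(round2) or not round1:
        raise ValueError("need two non-empty score lists of equal length")
    k = len(categories)
    index = {c: i for i, c in enumerate(categories)}
    n = len(round1)
    # Observed joint distribution of (round1, round2) score pairs.
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(round1, round2):
        observed[index[a]][index[b]] += 1 / n
    # Marginal score distributions of each round.
    m1 = [sum(row) for row in observed]
    m2 = [sum(observed[i][j] for i in range(k)) for j in range(k)]
    num = den = 0.0
    for i in range(k):
        for j in range(k):
            w = (i - j) ** 2 / (k - 1) ** 2  # quadratic disagreement weight
            num += w * observed[i][j]        # observed disagreement
            den += w * m1[i] * m2[j]         # disagreement expected by chance
    # den is 0 only if both rounds use one identical score throughout.
    return 1.0 if den == 0 else 1 - num / den


round1 = [5, 5, 5, 4, 3]  # hypothetical first-round scores
round2 = [4, 5, 5, 4, 3]  # hypothetical second round: one 5 became a 4
print(round(weighted_kappa(round1, round2), 3))  # 0.839
```

Quadratic weights penalise a 5 to 4 shift far less than a 5 to 2 shift, which suits scores whose categories are ordered.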

3.5 Measuring the success of actual semantic similarity

Alignment 20 from Text 1, illustrated in example (1) above, already showed that frequently occurring key terms can have a negative impact on ranking: whenever key terms occur repeatedly, the chances of multiple outputs with high cosine similarity scores increase, and the chances of a high ranking for the one specific output corresponding to the target decrease. In this section we briefly demonstrate that although a low ranking may at first sight indicate a bad result, it does not necessarily mean that our system is performing badly: high-ranked outputs may not be the exact target (as identified by expert philologists in our gold standard), but they can still convey the same or a very similar meaning. This is particularly visible for alignments with low average cosine similarity. Consider, for example, Alignment 8 from Text 1:

- (4)

- (a)

- (b)

- (5)

The average cosine similarity of this alignment with the Hybrid-2 embeddings is only 0.80 (standard deviation 0.02). The target is ranked 2nd with a cosine similarity of 0.85807, but the highest-ranked output, shown in (5), scored 0.88005. The colour coding shows that this output contains two of the key terms present in the Chinese input. Since the Chinese input is relatively short, overlap in two such highly specific terms can yield relatively high similarity and thus a highly ranked result.
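To make the ranking logic concrete, here is a minimal sketch of scoring candidate passages by the cosine similarity of averaged sequence vectors and reading off the rank of the gold-standard target. It assumes the Chinese and Tibetan token vectors already live in one shared space; the projection into that space is not shown. The 2-d vectors are toy values chosen so that a non-target candidate outscores the target, as happens with Alignment 8; they are not real embeddings.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def mean_vector(vectors: Sequence[Vector]) -> list[float]:
    """Average a sequence of token vectors component-wise."""
    dim = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def rank_candidates(query: Sequence[Vector],
                    candidates: Sequence[Sequence[Vector]]) -> list[tuple[int, float]]:
    """Score each candidate passage against the query by the cosine of their
    mean vectors and return (candidate index, score) pairs, best first."""
    q = mean_vector(query)
    scores = [(i, cosine(q, mean_vector(c))) for i, c in enumerate(candidates)]
    return sorted(scores, key=lambda s: s[1], reverse=True)


def rank_of(target: int, ranking: list[tuple[int, float]]) -> int:
    """1-based rank of the gold-standard target in a ranking."""
    for r, (i, _) in enumerate(ranking, start=1):
        if i == target:
            return r
    raise ValueError("target not among the candidates")


# Toy 2-d vectors: candidate 2 is the gold target, but candidate 1
# happens to lie closer to the query's mean vector.
query = [[1.0, 0.0], [0.0, 1.0]]
candidates = [[[1.0, 0.0]], [[1.0, 1.0]], [[0.0, 1.0], [1.0, 0.8]]]
ranking = rank_candidates(query, candidates)
print(rank_of(2, ranking))  # 2
```

Because each passage is reduced to a single averaged vector, a short input that shares a few highly specific terms with a non-target passage can pull that passage above the target, which is one way to read the Alignment 8 result.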

4 Conclusion

In this paper we presented the first-ever procedure for identifying highly similar sequences of text in Chinese and Tibetan translations of Buddhist sūtra literature. Our pilot study builds a cross-lingual embedding space and computes the cosine similarity of averaged sequence vectors in order to produce unsupervised cross-linguistic parallel alignments at word, sentence and even paragraph level. We evaluated the results against three Buddhist texts manually aligned by expert philologists. Initial results show that our method lays a solid foundation for the future development of a fully-fledged Information Retrieval tool for these (and potentially other) low-resource, historical languages. We will address questions of scalability and further philological use cases in future research.

Supplementary Files

Supplementary materials are deposited on Zenodo:

- Alignment Scoring Manual (): https://doi.org/10.5281/zenodo.6782150

- Buddhist Chinese embeddings (): https://doi.org/10.5281/zenodo.6782932

- Classical Tibetan embeddings (): https://doi.org/10.5281/zenodo.6782247